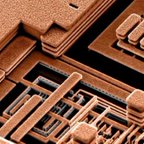

In a forecasting exercise, Gordon Earle Moore, co-founder of Intel, plotted data on the number of components (transistors, resistors, and capacitors) in chips made from 1959 to 1965. He saw an approximate straight line on log paper (see Figure 1). Extrapolating the line, he speculated that the number of components would grow from 2^6 (64) in 1965 to 2^16 (65,536) in 1975, doubling every year. His 1965–1975 forecast came true. In 1975, with more data, he revised the estimate of the doubling period to two years. In those days, doubling components also doubled chip speed because the greater number of components could perform more powerful operations and smaller circuits allowed faster clock speeds. Later, Moore's Intel colleague David House claimed the doubling time for speed should be taken as 18 months because of increasing clock speed, whereas Moore maintained that the doubling time for components was 24 months. But clock speed stabilized around 2000 because faster speeds caused more heat dissipation than chips could withstand. Since then, higher speeds have been achieved with multi-core chips at the same clock frequency.
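The arithmetic behind these doubling periods can be sketched in a few lines of Python. This is a minimal illustration, not taken from the article: the function names `projected_components` and `growth_factor` are made up for this sketch, and it assumes a perfectly constant doubling period, reading Moore's 1965 and 1975 figures as 2^6 = 64 and 2^16 = 65,536 components.

```python
def projected_components(year, base_year=1965, base_count=2**6, doubling_years=1.0):
    """Component count projected from a base year, assuming a fixed doubling period.

    All parameters are illustrative assumptions: 64 components in 1965 and
    one doubling per year correspond to Moore's original extrapolation.
    """
    doublings = (year - base_year) / doubling_years
    return base_count * 2 ** doublings


def growth_factor(span_years, doubling_years):
    """Multiplicative growth over span_years for a given doubling period."""
    return 2 ** (span_years / doubling_years)


if __name__ == "__main__":
    # Moore's 1965 extrapolation: doubling every year for ten years.
    for year in (1965, 1970, 1975):
        print(year, round(projected_components(year)))

    # Growth over one decade under the three doubling periods mentioned above.
    for label, period in (("12 months", 1.0), ("18 months", 1.5), ("24 months", 2.0)):
        print(label, round(growth_factor(10, period)))
```

Over one decade, a 12-month doubling period gives roughly a thousandfold increase, 18 months roughly a hundredfold, and 24 months a 32-fold increase, which is why the choice of doubling period matters so much for long-range forecasts.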

Read Article: cacm.acm.org/magazines/2017/1/211094-exponential-laws-of-computing-growth/fulltext

A wiggly, ravenous caterpillar, one that doesn’t limit its diet to naturally grown objects, can biodegrade plastic bags, a material infamous for the amount of time it takes to decompose, a new study finds.

Read Article: https://www.livescience.com/58810-caterpillar-biodegrades-plastic-bags.html

What is the cloud? Where is the cloud? Are we in the cloud now? These are all questions you’ve probably heard or even asked yourself. The term “cloud computing” is everywhere.

In the simplest terms, cloud computing means storing and accessing data and programs over the Internet instead of your computer’s hard drive. The cloud is just a metaphor for the Internet. It goes back to the days of flowcharts and presentations that would represent the gigantic server-farm infrastructure of the Internet as nothing but a puffy, white cumulus cloud, accepting connections and doling out information as it floats.

Read Article: https://www.pcmag.com/article2/0,2817,2372163,00.asp

by Bradley Mitchell

Updated March 19, 2017

The terms “information technology” and “IT” are widely used in business and the field of computing. People use the terms generically when referring to various kinds of computer-related work, which sometimes confuses their meaning.

What Is Information Technology?

A 1958 article in Harvard Business Review referred to information technology as consisting of three basic parts: computational data processing, decision support, and business software. This time period marked the beginning of IT as an officially defined area of business; in fact, this article probably coined the term. Over the ensuing decades, many corporations created so-called “IT departments” to manage the computer technologies related to their business. Whatever these departments worked on became the de facto definition of Information Technology, one that has evolved over time. Today, IT departments have responsibility in areas like

The future is now, or at least it is coming soon. Today's technological developments are looking very much like what once was the domain of science fiction. Maybe we don't have domed cities and flying cars, but we do have buildings that reach to the heavens, and drones that soon could deliver our packages. Who needs a flying car when the self-driving car -- though still on the ground -- is just down the road?

The media often compares technological advances to science fiction, and the go-to examples cited are often Star Trek, The Jetsons and various 1980s and 90s cyberpunk novels and similar dark fiction. In many cases, this is because many tech advances actually are fairly easy comparisons to what those works of fiction presented. On the other hand, they tend to be really lazy comparisons. Every advance in holographic technology should not immediately evoke Star Trek's holodeck, and every servant-styled robot should not immediately be compared to Rosie, the maid-robot in The Jetsons.

Read Article: http://www.technewsworld.com/story/84479.html

We look back on some of the most important events in networking over the years and find out from the experts what the future of this sector is set to look like

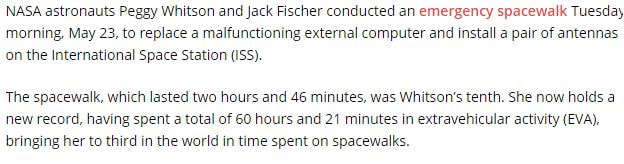

What started out as a collection of computers sending commands to one another has evolved into a computing sector covering areas such as Network Attached Storage, Wi-Fi, the cloud and the burgeoning Internet of Things market. Here, we look back on some of the most important events in computer networking over the years and find out from the experts what the future of this sector is set to look like over the coming years…

1940: George Stibitz, who is internationally recognised as one of the fathers of the first modern digital computer, uses a teletype (an electromechanical typewriter that can be used to send and receive typed messages) to send commands to the Complex Number Computer in New York over telegraph lines. It was the first computing machine ever used remotely.

1964: American Airlines calls on IBM to implement the SABRE reservation system and online transaction processing is born. Using telephone lines, SABRE links 2,000 terminals in 65 cities to a pair of IBM 7090 computers and is able to deliver data on any flight in less than three seconds. Before the introduction of SABRE, the American Airlines system for booking flights was entirely manual. It consisted of a team of eight operators who sorted through a rotating file with cards for every flight.

1980s: Access to the ARPANET is expanded in 1981. In 1982, the internet protocol suite (TCP/IP) is introduced as the standard networking protocol on the ARPANET. In the early 1980s the NSF funds the establishment of national supercomputing centers at several universities, and provides interconnectivity in 1986 with the NSFNET project, which also creates network access to the supercomputer sites in the United States from research and education organisations. Commercial Internet service providers (ISPs) begin to emerge in the late 1980s.

Read Entire Article: http://www.pcr-online.biz/news/read/a-brief-history-of-computer-networking/037899

Intel realizes there will be a post-Moore’s Law era and is already investing in technologies to drive computing beyond today’s PCs and servers.

The chipmaker is “investing heavily” in quantum and neuromorphic computing, said Brian Krzanich, CEO of Intel, during a question-and-answer session at the company’s investor day on Thursday. “We are investing in those edge type things that are way out there,” Krzanich said. To give an idea of how far out these technologies are, Krzanich said his daughter would perhaps be running the company by then.

Read Article: http://www.pcworld.com/article/3168753/components-processors/intel-researches-tech-to-prepare-for-a-future-beyond-todays-pcs.html

Most of the time, when we talk about the potential impact of next-generation technologies on future computers, we’re talking about transistor performance. This makes sense: transistor scaling is what Moore’s law covers, and improving transistor density and design is what drove the “better, faster, cheaper” mantra for nearly 40 years. But transistors aren’t the only area of CPU design that could benefit from dramatic improvements to underlying technology, and a team of researchers at Stanford believes it can address another critical problem that’s holding modern chips back by building connective structures from copper and graphene combined rather than just copper.

Read Article: https://www.extremetech.com/computing/244693-graphene-coated-copper-dramatically-boost-future-cpu-performance

About Oliver Briscoe

Oliver Briscoe is a 20+ year veteran of the Information Technology field. He understands his first principles and loves teaching others.

Archives

October 2017
