
We look back on some of the most important events in networking over the years and find out from the experts what the future of this sector is set to look like

What started out as a collection of computers sending commands to one another has evolved into a computing sector covering areas such as Network Attached Storage, Wi-Fi, the cloud and the burgeoning Internet of Things market. Here, we look back on some of the most important events in computer networking over the years and find out from the experts what the future of this sector is set to look like in the coming years…

1940

George Stibitz, who is recognised internationally as one of the fathers of the modern digital computer, uses a teletype (an electromechanical typewriter that can send and receive typed messages) to send commands to the Complex Number Computer in New York over telegraph lines. It is the first computing machine ever used remotely.

1964

American Airlines calls on IBM to implement the SABRE reservation system, and online transaction processing is born. Using telephone lines, SABRE links 2,000 terminals in 65 cities to a pair of IBM 7090 computers and is able to deliver data on any flight in less than three seconds. Before the introduction of SABRE, American Airlines' system for booking flights was entirely manual. It consisted of a team of eight operators who sorted through a rotating file with cards for every flight.

1980s

Access to the ARPANET is expanded in 1981. In 1982, the internet protocol suite (TCP/IP) is introduced as the standard networking protocol on the ARPANET. In the early 1980s the NSF funds the establishment of national supercomputing centers at several universities, and in 1986 it provides interconnectivity with the NSFNET project, which also creates network access to the supercomputer sites in the United States for research and education organisations. Commercial Internet service providers (ISPs) begin to emerge in the late 1980s.
Read Entire Article: http://www.pcr-online.biz/news/read/a-brief-history-of-computer-networking/037899

Intel realizes there will be a post-Moore’s Law era and is already investing in technologies to drive computing beyond today’s PCs and servers.

The chipmaker is “investing heavily” in quantum and neuromorphic computing, said Brian Krzanich, CEO of Intel, during a question-and-answer session at the company’s investor day on Thursday. “We are investing in those edge type things that are way out there,” Krzanich said. To give an idea of how far out these technologies are, Krzanich said his daughter would perhaps be running the company by then.

Read Article: http://www.pcworld.com/article/3168753/components-processors/intel-researches-tech-to-prepare-for-a-future-beyond-todays-pcs.html

Most of the time, when we talk about the potential impact of next-generation technologies on future computers, we’re talking about transistor performance. This makes sense: transistor scaling is what Moore’s law covers, and improving transistor density and design is what drove the “better, faster, cheaper” mantra for nearly 40 years. But transistors aren’t the only area of CPU design that could benefit from dramatic improvements to underlying technology, and a team of researchers at Stanford believes it can address another critical problem that’s holding modern chips back by building connective structures from copper and graphene combined rather than from copper alone.

Read Article: https://www.extremetech.com/computing/244693-graphene-coated-copper-dramatically-boost-future-cpu-performance

Google today is unveiling its second-generation Tensor Processing Unit, a cloud computing hardware and software system that underpins some of the company’s most ambitious and far-reaching technologies. CEO Sundar Pichai announced the news onstage at the keynote address of the company’s I/O developer conference this morning.

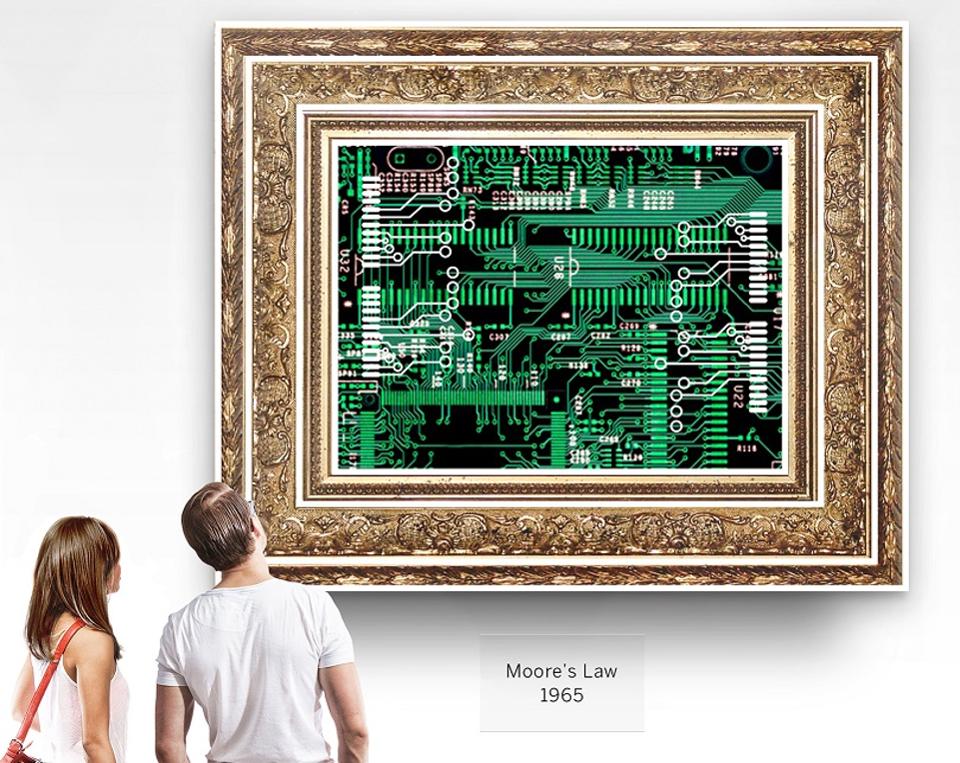

Read Entire Article: https://www.theverge.com/2017/5/17/15649628/google-tensor-processing-unit-tensorflow-ai-training-system

Moore’s Law posits that the number of transistors on a microprocessor, and therefore their computing power, will double every two years. It’s held true since Gordon Moore came up with it in 1965, but its imminent end has been predicted for years. As long ago as 2000, the MIT Technology Review raised a warning about the limits of how small and fast silicon technology can get.
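
To make the "double every two years" claim concrete, here is a minimal Python sketch of the idealized doubling rule. The function name `projected_transistors` and the starting count of 1,000 are hypothetical and chosen only for illustration. Real chips have not followed this curve exactly.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Project a transistor count under idealized exponential doubling.

    Assumes the count doubles once every `doubling_period_years`,
    which is the simplified reading of Moore's Law used in the text.
    """
    return initial_count * 2 ** (years / doubling_period_years)


# Hypothetical starting figure, not a real chip's transistor count.
start = 1_000
for elapsed in (0, 2, 10, 20):
    print(elapsed, "years:", round(projected_transistors(start, elapsed)))
```

Under this rule ten years means five doublings, or a 32-fold increase, which is why even small changes in the doubling period compound into large differences over decades.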

Read Entire Article: https://www.forbes.com/sites/sap/2016/09/12/7-surprising-innovations-for-the-future-of-computing/#753c7c541aed

Your smartphone is millions of times more powerful than all of NASA’s combined computing in 1969

7/6/2017

That’s the year man first set foot on the moon. Unless you’re a rocket scientist, it’s difficult to imagine the technical challenges of landing on the moon more than five decades ago, but what’s certain is that computers played a fundamental role, even back then. Although the NASA computers were pitiful by today’s standards, they were capable enough to guide humans across 356,000 km of space from the Earth to the Moon and return them safely. In fact, during the first Apollo missions, critical safety and propulsion mechanisms controlled by software were used for the first time, and they formed the basis for modern computing.

Read Entire Article: http://www.zmescience.com/research/technology/smartphone-power-compared-to-apollo-432/

Moore’s Law is one of the most durable technology forecasts ever made [10, 20, 31, 33]. It is the emblem of the information age, the relentless march of the computer chip enabling a technical, economic, and social revolution never before experienced by humanity.

Read Article: https://cacm.acm.org/magazines/2017/1/211094-exponential-laws-of-computing-growth/fulltext

About Oliver Briscoe

Oliver Briscoe is a 20+ year veteran of the Information Technology field. He understands his first principles and loves teaching others.
